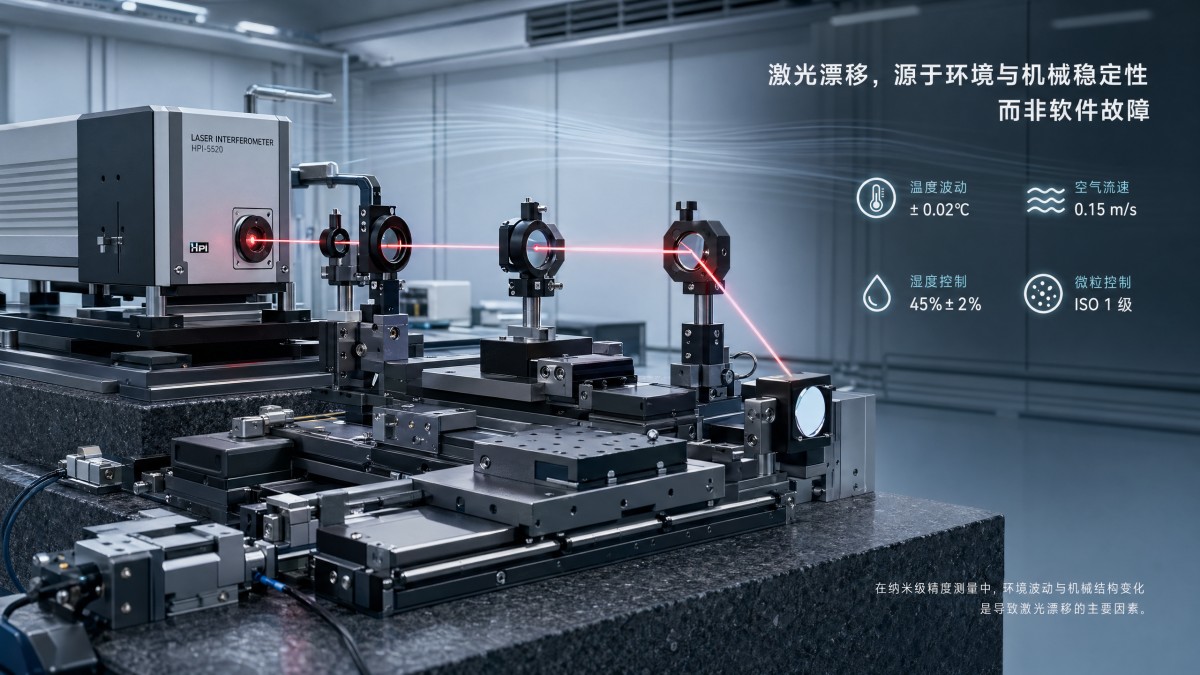

Laser-Interferometer drift is often not a software problem

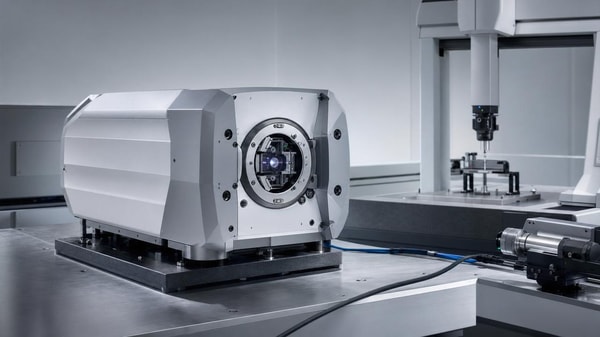

When Laser-Interferometer drift appears on the shop floor, many service teams first suspect software. In reality, the root cause often lies in environmental instability, alignment error, contamination, or mechanical behavior outside code logic. For after-sales maintenance personnel, understanding these physical sources is essential to faster troubleshooting, reduced downtime, and more reliable ultra-precision system performance.

Why the troubleshooting focus is shifting away from software-first assumptions

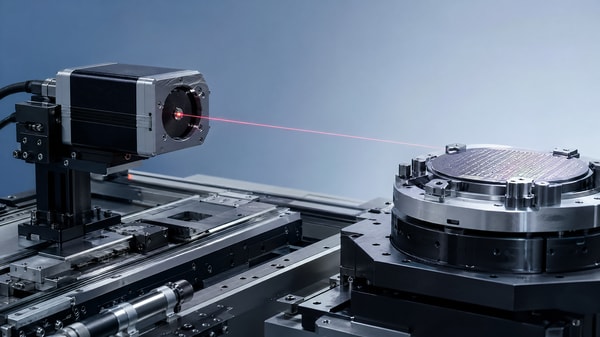

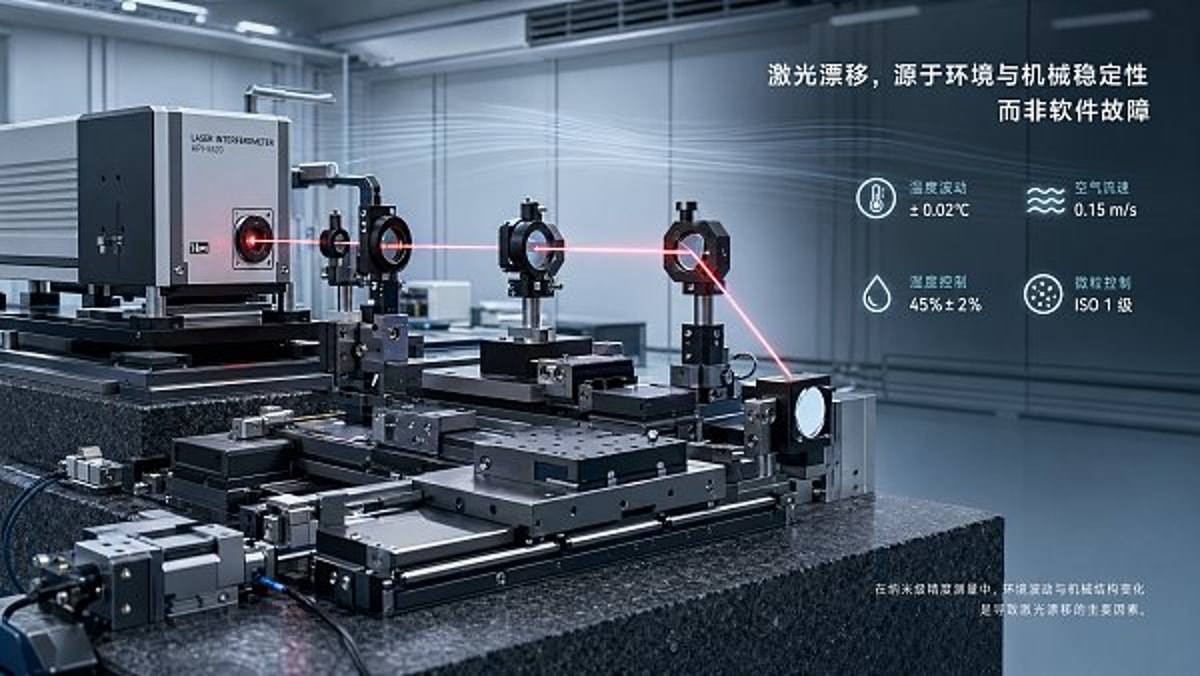

A clear industry change is underway in ultra-precision manufacturing and metrology: Laser-Interferometer drift is increasingly treated as a system-level stability issue rather than a code-level fault. This shift matters because service teams are now supporting tighter process windows, often in the sub-micron to nanometer range, where a 1 °C room change, a slight air turbulence pattern, or a small optical contamination layer can create measurement movement that looks digital but is fundamentally physical.
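To put rough numbers on why a 1 °C change matters, the sketch below estimates two first-order effects: the apparent length change from an uncompensated shift in the refractive index of air, and the thermal expansion of a mount or workpiece. The sensitivity coefficients are commonly quoted approximations near 20 °C and 1013 hPa, the expansion coefficients are typical handbook values, and the function names, path length, and part length are assumptions chosen for illustration, not values from any specific instrument.

```python
# First-order estimate only. Coefficients are approximate textbook values
# near 20 degC and 1013 hPa (assumptions, not instrument specifications).
AIR_PPM_PER_DEGC = -0.93   # air refractive index change per degC, in ppm
AIR_PPM_PER_HPA = 0.27     # air refractive index change per hPa, in ppm
CTE_PPM_PER_DEGC = {"steel": 11.5, "aluminum": 23.0, "invar": 1.2}  # typical


def air_path_error_nm(path_m, d_temp_c=0.0, d_press_hpa=0.0):
    """Apparent length change (nm) over an air path when the refractive
    index shifts and no compensation is applied."""
    ppm = AIR_PPM_PER_DEGC * d_temp_c + AIR_PPM_PER_HPA * d_press_hpa
    return ppm * 1e-6 * path_m * 1e9


def expansion_nm(length_m, material, d_temp_c):
    """Thermal growth (nm) of a part of the given length and material."""
    return CTE_PPM_PER_DEGC[material] * 1e-6 * length_m * d_temp_c * 1e9


if __name__ == "__main__":
    # Hypothetical case: 0.5 m beam path and a 0.1 m steel bracket, +1 degC.
    print(air_path_error_nm(0.5, d_temp_c=1.0))
    print(expansion_nm(0.1, "steel", 1.0))
```

Under these assumptions, both effects land in the range of several hundred nanometers to about a micrometer for a single degree of change, which is why neither can be ignored at sub-micron tolerances.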

For after-sales maintenance personnel, this change affects daily work directly. In older service practice, software rollback, parameter reset, and controller reboot were often the first three steps. In current high-accuracy environments, those steps still have value, but they are no longer sufficient on their own. The more advanced the machine, the more likely drift is tied to thermal gradients, refractive index variation, stage vibration, cable stress, or mounting relaxation over 24 to 72 hours of production.

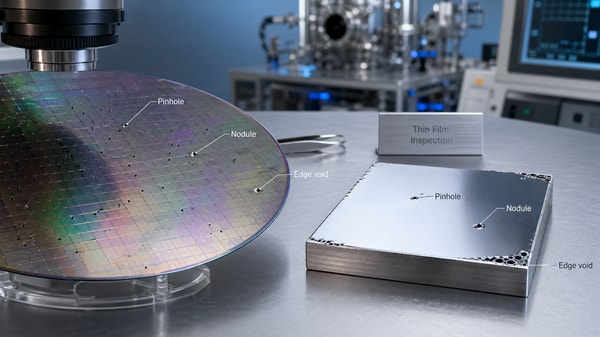

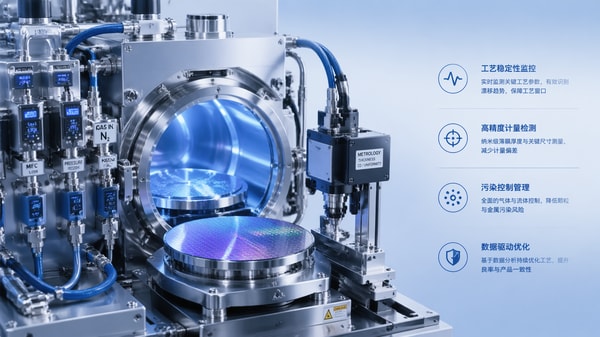

This is especially visible across semiconductor support tools, precision CMM platforms, nano-positioning assemblies, optics alignment stations, and high-end motion systems. As production lines move toward lower defect tolerance and more automated compensation, the hidden assumption that software can correct everything is losing ground. Laser-Interferometer performance now depends on the interaction of optics, mechanics, air conditions, control tuning, and maintenance discipline.

Common signals behind the shift

- Machine accuracy targets have tightened from general micron-level positioning toward sub-micron and nanometer-sensitive verification tasks.

- Service intervals have shortened in many factories from quarterly intervention to monthly or event-driven checks when uptime pressure is high.

- Facilities are running more mixed-load environments, where nearby HVAC cycles, compressed air demand, or operator traffic change local thermal and vibration behavior.

- Laser-Interferometer systems are more often integrated into broader machine architectures, increasing the number of interfaces where non-software drift can emerge.

The practical implication is simple: maintenance teams who treat drift as a multidisciplinary symptom can usually isolate root causes faster than teams who remain locked into a software-first diagnosis path. In many service cases, the problem is not that the software is wrong; it is that the physical world feeding the software has changed.

What is driving the increase in apparent Laser-Interferometer drift events

The rise in reported Laser-Interferometer drift is not necessarily evidence that systems are becoming less capable. In many cases, it reflects more demanding applications and more sensitive detection. A line that once tolerated ±2 µm may now be investigating deviations below 0.5 µm. Under those conditions, environmental effects that were previously invisible now become maintenance-critical.

Another driver is system density. Modern production areas often place precision metrology, motion stages, gas delivery equipment, fluid control subsystems, and clean utility infrastructure in tighter proximity. Even when each subsystem is compliant on its own, the combined thermal load and vibration signature can influence Laser-Interferometer behavior. For service engineers, this means troubleshooting must extend beyond the instrument enclosure and into surrounding process conditions.

A third factor is the growing use of compensation layers. Software compensation can mask a slowly developing physical problem for weeks or months, then suddenly fail when the drift pattern exceeds the compensation model. This creates a misleading timeline: the software appears to become unstable, but the actual change may have started earlier through contamination, bracket creep, beam path misalignment, or air refractive instability.
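One way to catch this pattern early is to trend the size of the applied compensation rather than waiting for it to fail. The sketch below fits a straight line to logged compensation values and projects when the trend would reach a given limit. It assumes the compensation magnitude can be exported per service check and that growth is roughly linear; the function names, units, and the limit value are hypothetical.

```python
def linear_trend(days, values):
    """Least-squares slope and intercept of values versus days."""
    n = len(days)
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_limit(days, comp_nm, limit_nm):
    """Project how many days after the last log entry a growing
    compensation term reaches limit_nm. Returns None when the trend
    is flat or shrinking."""
    slope, intercept = linear_trend(days, comp_nm)
    if slope <= 0:
        return None
    return (limit_nm - intercept) / slope - days[-1]


if __name__ == "__main__":
    # Hypothetical weekly log of applied compensation, in nm.
    log_days = [0, 7, 14, 21, 28]
    log_comp = [10, 24, 38, 52, 66]
    print(days_until_limit(log_days, log_comp, limit_nm=100))
```

A steadily rising compensation value is a prompt to inspect optics, brackets, and beam path alignment before the model runs out of range, not a root cause in itself.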

Main physical drivers now drawing more attention

The table below summarizes common drift drivers that are increasingly affecting Laser-Interferometer service work in integrated industrial environments.

The important reading of this table is that Laser-Interferometer drift often emerges from conditions that evolve gradually. Service teams that record only alarm history may miss those slow changes. Teams that add environmental logging, mounting review, and optical inspection usually build a more accurate failure picture.

Why this trend matters across a general industrial support model

Across the industrial domains that G-UPE covers, Laser-Interferometer performance rarely stands alone. Pneumatic actuation can introduce vibration, coatings or residues can degrade optics, metrology workflows can expose hidden instability, and nano-positioning systems can amplify sensitivity to small physical shifts. That interdependency is why drift analysis is becoming more cross-functional and less software-centered.

How the impact differs for after-sales maintenance teams, production users, and procurement stakeholders

The current Laser-Interferometer trend does not affect every stakeholder in the same way. After-sales maintenance personnel carry the first diagnostic burden, but production operators experience immediate downtime, while procurement and engineering managers feel the cost of repeated service calls, spare allocation, and delayed acceptance. Understanding these layered impacts helps maintenance teams communicate more effectively and justify broader root-cause checks.

For service staff, the biggest operational change is the need to gather more contextual evidence before adjusting software parameters. A machine may run correctly for the first 2 hours and drift after 6 hours, or pass static checks but fail during high-duty motion cycles. These patterns suggest that time-based and condition-based testing are now as important as controller diagnostics.

For production teams, the visible impact is often inconsistency rather than total failure. Scrap risk rises, verification cycles lengthen, and confidence in process capability declines. For procurement teams, repeated Laser-Interferometer issues can trigger questions about installation quality, environmental preparation, maintenance scope, and whether acceptance criteria were realistic for the intended application.

Impact by stakeholder group

The following comparison helps explain why Laser-Interferometer drift must be framed as a shared operational issue rather than a narrow service complaint.

A key takeaway is that better Laser-Interferometer support starts with better shared language. When maintenance reports include drift timing, local thermal conditions, recent mechanical intervention, and optical cleanliness status, decision-makers can respond with more precision. This reduces the repeated cycle of software changes that do not address the true source.

Where support conversations are changing most

- Acceptance testing is increasingly expected to include warm-up behavior over defined periods such as 30 minutes, 2 hours, and a full operating shift.

- Maintenance teams are being asked to verify not only sensor status, but also beam path integrity and environmental stability around the machine footprint.

- Procurement reviews now more often include supportability questions: spare optics, contamination control, calibration intervals, and environmental prerequisites.

Which diagnostic signals deserve closer attention in the next service cycle

One of the strongest trends in Laser-Interferometer maintenance is the move from symptom-based repair to signal-based diagnosis. Instead of asking only whether drift is present, effective teams ask when it starts, how fast it develops, whether it follows machine motion direction, and whether it correlates with temperature, airflow, or support structure conditions. These questions turn vague complaints into actionable service logic.
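The correlation question can be sketched in a few lines. The example below computes a Pearson correlation between logged drift and each environmental channel sampled at the same times, then ranks the channels by strength of association. The channel names and sample values are hypothetical, and a high correlation only suggests where to look first; it does not prove cause.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 for a constant series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def rank_suspects(drift_nm, channels):
    """Rank environmental channels by |correlation| with drift.
    channels maps a channel name to readings taken at the same
    timestamps as drift_nm."""
    scores = {name: pearson(vals, drift_nm) for name, vals in channels.items()}
    return sorted(scores.items(), key=lambda kv: abs(kv[1]), reverse=True)


if __name__ == "__main__":
    # Hypothetical log: drift in nm plus two environmental channels.
    drift = [0, 10, 20, 30, 40]
    env = {
        "air_temp_c": [20.0, 20.1, 20.2, 20.3, 20.4],
        "humidity_pct": [45, 44, 46, 45, 45],
    }
    for name, r in rank_suspects(drift, env):
        print(name, round(r, 3))
```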

In practical terms, there are several warning signals that often indicate a non-software origin. If a Laser-Interferometer system is stable after reboot but degrades after thermal soak, physical expansion is a likely contributor. If drift appears after preventive maintenance, mounting tension, cable routing, or optical realignment should be reviewed. If values fluctuate during nearby door openings or HVAC transitions, refractive instability and air disturbance become strong suspects.

Another valuable signal is asymmetry. Software logic errors often affect response in a more consistent way, while mechanical or optical issues may appear differently by axis, direction, acceleration profile, or position in travel. A drift event that is stronger in the last 20% of stage travel or after repeated high-speed cycles points toward structure, cable force, support flatness, or motion-induced heat rather than pure code behavior.
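A position-dependent check like the one described above can be sketched as follows. The function splits error samples into the last 20% of travel and the rest, then compares mean absolute error in each region. The travel length, sample positions, and function name are hypothetical, and the split fraction is a parameter rather than a fixed rule.

```python
def travel_asymmetry(positions_mm, errors_nm, travel_mm, tail_fraction=0.2):
    """Compare mean absolute error in the last tail_fraction of travel
    against the rest. Returns (tail_mean, body_mean, ratio)."""
    cutoff = travel_mm * (1.0 - tail_fraction)
    tail = [abs(e) for p, e in zip(positions_mm, errors_nm) if p >= cutoff]
    body = [abs(e) for p, e in zip(positions_mm, errors_nm) if p < cutoff]
    if not tail or not body:
        raise ValueError("need samples in both regions of travel")
    tail_mean = sum(tail) / len(tail)
    body_mean = sum(body) / len(body)
    ratio = tail_mean / body_mean if body_mean else float("inf")
    return tail_mean, body_mean, ratio


if __name__ == "__main__":
    # Hypothetical 300 mm axis with larger errors near end of travel.
    pos = [0, 50, 100, 150, 200, 250, 280, 300]
    err = [10, -10, 10, -10, 10, 40, -50, 60]
    print(travel_asymmetry(pos, err, travel_mm=300))
```

Running the same comparison per axis and per motion direction helps separate a structural or cable-force effect from behavior that is uniform across the machine.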

Priority checklist for after-sales teams

- Record environmental conditions at the machine, not only facility setpoints; local variation can differ from room specification by meaningful margins.

- Inspect optical surfaces and beam path obstructions before changing compensation parameters.

- Compare cold-start, 1-hour, 4-hour, and end-of-shift readings to identify time-linked drift behavior.

- Review recent mechanical intervention, including bracket torque, stage service, cable replacement, and transport events.

- Check whether instability is axis-specific, location-specific, or load-specific; these patterns often narrow root cause rapidly.

This checklist is valuable because it organizes Laser-Interferometer troubleshooting around physical evidence. In many facilities, adding these 5 steps can prevent multiple unnecessary controller adjustments and reduce repeat visits. It also improves handover quality between field service, internal engineering, and the end user.
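The timed comparison in the checklist can be sketched as a small helper that reports drift relative to the cold-start reading and flags any checkpoint that exceeds a tolerance. The checkpoint labels, readings, and the 500 nm tolerance are hypothetical; all readings are assumed to be taken at the same reference position.

```python
def drift_from_cold_start(readings_nm, tolerance_nm):
    """readings_nm maps checkpoint labels to readings in nm, in the order
    they were taken; the first entry is treated as the cold-start baseline.
    Returns a list of (label, drift_nm, exceeds_tolerance)."""
    labels = list(readings_nm)
    baseline = readings_nm[labels[0]]
    report = []
    for label in labels[1:]:
        drift = readings_nm[label] - baseline
        report.append((label, drift, abs(drift) > tolerance_nm))
    return report


if __name__ == "__main__":
    # Hypothetical readings at one reference position, in nm.
    readings = {
        "cold_start": 0.0,
        "1h": 120.0,
        "4h": 310.0,
        "end_of_shift": 560.0,
    }
    for row in drift_from_cold_start(readings, tolerance_nm=500.0):
        print(row)
```

Keeping the same checkpoints on every visit makes reports comparable between service calls and between field service and internal engineering.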

Useful standards-aware mindset without overcomplication

Service teams do not need to turn every call into a laboratory study, but they should work with a standards-aware mindset. Where applicable, reference to ISO-aligned measurement discipline, SEMI-style environmental rigor, or IEEE-related instrumentation expectations can help structure discussions. The point is not paperwork; it is consistency. Laser-Interferometer systems respond best when maintenance evidence is collected in a repeatable way.

How maintenance strategy should evolve as precision requirements continue to tighten

Looking ahead, the most important trend is that Laser-Interferometer support will become more preventive, more data-linked, and more interdisciplinary. As precision targets narrow further, the cost of treating drift as an isolated software issue will rise. Teams will need to combine optics care, motion analysis, environmental review, and service documentation into a single response model.

For many organizations, this means adjusting maintenance routines across three layers. First, field response should include a defined environmental and mechanical review. Second, periodic service should include contamination control and alignment verification at planned intervals, such as monthly quick checks and deeper quarterly reviews. Third, escalation paths should distinguish between compensation adjustment and root-cause correction, because the first only hides a physical change while the second removes it.

It also means better coordination between stakeholders. Facilities teams influence thermal and airflow stability. Production scheduling influences machine warm-up behavior. Engineering influences mounting design and acceptance thresholds. Procurement influences spare planning and support scope. Laser-Interferometer stability improves when these functions act on shared evidence instead of isolated assumptions.

A practical decision framework for the next 6 to 12 months

The table below can help service organizations and end users prioritize action as Laser-Interferometer demands become more stringent.

The value of this framework is that it keeps Laser-Interferometer reliability tied to observable conditions, not assumptions. It also supports a more mature service model in which maintenance is not judged only by how quickly software was touched, but by how accurately the physical cause was isolated and corrected.

Why choosing the right technical partner matters

When drift appears, many teams need more than a replacement part or a remote parameter suggestion. They need help confirming whether the issue involves optics, nano-positioning behavior, fluid-induced vibration, contamination pathways, or broader metrology conditions. That is where a multidisciplinary intelligence and benchmarking perspective becomes useful, especially in complex B2B environments where precision systems do not operate in isolation.

At G-UPE, our focus is the frontier of accuracy across coatings, precision pneumatic and fluid control, CMM and multisensory metrology, ultra-high purity chemicals and gases, and micro-manipulation and nano-positioning systems. This makes us a relevant technical partner for organizations trying to understand why Laser-Interferometer drift is appearing, how surrounding subsystems may be contributing, and what evidence should shape the next maintenance or procurement decision.

If you are evaluating Laser-Interferometer instability, contact us to discuss parameter confirmation, fault pattern review, custom diagnostic planning, and applicable standards expectations. We can also cover product and subsystem selection, expected delivery cycles for critical components, sample support options where relevant, and quotations for broader precision system upgrades. The earlier these questions are clarified, the easier it becomes to reduce downtime and restore stable performance with confidence.
