Multidisciplinary Engineering Looks Efficient Until Handoffs Start

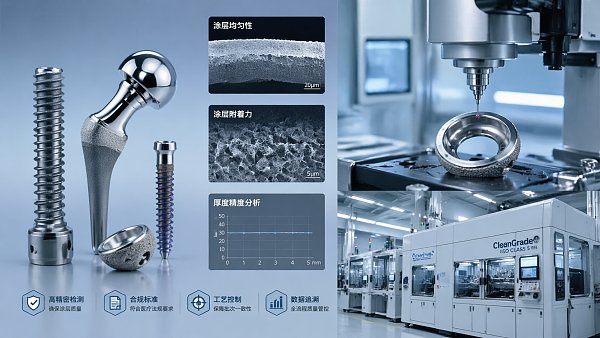

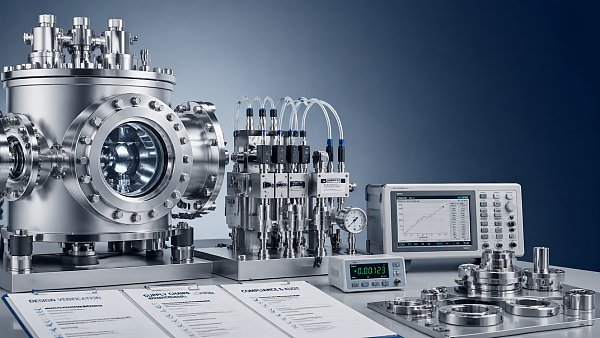

Multidisciplinary engineering promises speed and innovation, but in zero-defect manufacturing every handoff can introduce risk. For aerospace components, biological implants, and semiconductor manufacturing, success depends on more than technical excellence: it also requires procurement intelligence, regulatory foresight, and industrial integrity. This article explores why fragmented workflows fail and how better coordination protects quality, compliance, and competitive advantage.

For researchers, operators, procurement teams, commercial evaluators, executives, and quality or safety managers, the problem is rarely a lack of expertise inside one discipline. The problem is what happens between disciplines: when coating teams use one tolerance language, metrology teams use another, and procurement compares suppliers on price before checking contamination thresholds, calibration traceability, or export-control exposure.

In ultra-precision environments, a 2-week delay, a 0.5 µm measurement mismatch, or a missing gas purity declaration can disrupt qualification, trigger non-conformance, or force a redesign. The commercial impact can be just as severe as the technical one. A fragmented engineering chain often looks efficient on paper, yet fails when accountability, data continuity, and regulatory readiness are tested under production pressure.

Why Multidisciplinary Engineering Breaks Down at the Handoff Stage

A multidisciplinary model usually combines materials science, process engineering, fluid control, metrology, motion systems, and compliance management. That structure can accelerate early-stage concept work. However, once projects move from design to sourcing, installation, validation, and ongoing control, each handoff becomes a transfer of assumptions. If those assumptions are not standardized, defect risk rises quickly.

In aerospace, implant manufacturing, and semiconductor production, the acceptable error window may be measured in microns, sub-microns, or parts-per-billion contamination levels. A supplier may meet the headline technical requirement while failing a less visible one in packaging, material compatibility, or documentation. The result is often not a dramatic system failure, but a chain of smaller misses that compound over 3 to 5 process stages.

The handoff problem usually appears in four forms: specification drift, undocumented process change, non-aligned validation criteria, and delayed risk escalation. When procurement, engineering, and quality review different versions of the same requirement set, suppliers can be approved against incomplete criteria. That is especially common in projects involving ALD precursors, high-purity gases, nano-positioning stages, and multi-sensor CMM workflows.

Typical handoff failures in zero-defect projects

- Drawings specify dimensional tolerance, but omit surface energy, coating adhesion, or outgassing limits.

- Metrology plans define repeatability targets, yet not the calibration interval, environmental control range, or sensor correlation method.

- Procurement compares lead times of 4–8 weeks, but does not map that schedule against qualification time of 2–6 weeks.

- Compliance teams review ISO or SEMI requirements late, after supplier selection has already narrowed options.

The issue is not that specialization is wrong. The issue is that specialization without integration creates blind spots. An ultra-precision pneumatic actuator, for example, may perform well in isolation, but if its response profile is not aligned with the stage controller, chemical exposure limits, and metrology acceptance plan, the final system will underperform despite each component looking compliant on its own.

Where the Operational and Commercial Risks Actually Appear

The most expensive failures are often discovered after installation, not during supplier presentation or technical bid review. At that point, teams are no longer debating brochures; they are dealing with contamination events, unstable measurements, delayed PPAP-like approvals, or missed production ramps. In high-value sectors, one failed handoff can affect uptime, yield, audit readiness, and customer trust simultaneously.

Operational risk is tightly linked to commercial risk. A supplier that promises delivery 10 days sooner may still be the wrong choice if its documentation is incomplete, firmware traceability is unclear, or service coverage is limited to one region. Decision-makers should evaluate not only performance parameters but also lifecycle support across commissioning, recalibration, replacement parts, and regulatory updates over 12–36 months.

Risk map across the engineering chain

The table below shows how common handoff failures translate into measurable exposure for procurement, quality, and operations teams.

The pattern is consistent: the earlier teams surface cross-functional constraints, the lower the downstream correction cost. In many industrial programs, the cost of identifying a mismatch during specification review is a fraction of the cost incurred after installation or validation failure. That is why benchmarked engineering data and synchronized review gates are commercially valuable, not merely technically desirable.

Warning signs decision-makers should not ignore

- Critical specifications are spread across drawings, emails, and supplier quotations rather than one controlled document set.

- Different teams use different revision dates or acceptance thresholds.

- Supplier evaluation focuses on unit cost without scoring validation support, contamination control, and service response time.

- There is no agreed escalation path for deviations found during FAT, SAT, or first article inspection.
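The second warning sign, teams working from different revisions, is straightforward to check automatically once revision data sits in one place. The following is a minimal sketch, assuming each team can export a list of (team, requirement ID, revision) entries; the function name, record layout, and sample values are hypothetical and not taken from any specific PLM or QMS tool.

```python
from collections import defaultdict


def find_revision_conflicts(records):
    """Return requirements for which teams hold different revisions.

    `records` is an iterable of (team, requirement_id, revision) tuples.
    The result maps each conflicting requirement ID to a dict of
    {team: revision}, so the mismatch is visible per team.
    """
    revisions_by_req = defaultdict(dict)
    for team, req_id, revision in records:
        revisions_by_req[req_id][team] = revision

    return {
        req_id: teams
        for req_id, teams in revisions_by_req.items()
        if len(set(teams.values())) > 1  # more than one distinct revision
    }


# Hypothetical records, for illustration only.
records = [
    ("engineering", "REQ-001", "C"),
    ("procurement", "REQ-001", "B"),
    ("quality", "REQ-001", "C"),
    ("engineering", "REQ-002", "A"),
    ("quality", "REQ-002", "A"),
]

conflicts = find_revision_conflicts(records)
for req_id, teams in conflicts.items():
    print(req_id, teams)
```

In this sample, only REQ-001 is flagged, because procurement is still working from an older revision than engineering and quality.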

For enterprises buying across multiple regions, another risk layer is regulatory drift. Export controls, chemical handling restrictions, and documentation expectations can change faster than annual sourcing plans. Without a live intelligence framework, teams may source a technically valid component that becomes commercially difficult to ship, support, or certify.

How Better Coordination Improves Quality, Compliance, and Procurement Outcomes

The most effective response is not adding more meetings. It is creating one decision framework across the five industrial pillars that typically influence ultra-precision manufacturing: coatings and thin films, pneumatic and fluid control, metrology, ultra-high purity chemicals and gases, and micro-manipulation or nano-positioning systems. When teams evaluate these pillars through shared criteria, handoffs become controlled transitions rather than risky interpretation gaps.

A coordinated framework should define 4 core layers: technical performance, process compatibility, compliance readiness, and lifecycle support. Technical performance covers measurable values such as repeatability, purity, resolution, flow stability, and environmental sensitivity. Process compatibility checks whether each component fits adjacent materials, software, fixtures, and validation routines. Compliance readiness verifies required standards and shipping constraints before purchase orders are released.

Lifecycle support is often underestimated. In high-precision systems, uptime depends not only on the initial specification but also on recalibration intervals, preventive maintenance, spare lead times, and engineering support responsiveness. A stage with nanometer-class resolution can still become a bottleneck if replacement lead time stretches beyond 8 weeks or if calibration support is available only once per quarter.

A practical cross-functional review model

- Stage 1: Requirement locking. Freeze performance thresholds, contamination limits, interface conditions, and document ownership before RFQ release.

- Stage 2: Supplier benchmarking. Compare vendors against ISO, SEMI, IEEE, or internal validation criteria using the same scoring format.

- Stage 3: Pre-qualification testing. Confirm fit under actual operating ranges such as temperature, pressure, vibration, and cleanroom conditions.

- Stage 4: Controlled handoff. Transfer revision-controlled specs, calibration records, and acceptance methods to operations and quality teams.

- Stage 5: Post-installation monitoring. Review drift, maintenance frequency, and deviation events after 30, 90, and 180 days.
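The Stage 5 checkpoints can be turned into calendar dates with a few lines of code. This is a small sketch, assuming the review clock starts on the installation date; the installation date used here is hypothetical.

```python
from datetime import date, timedelta

# Review checkpoints from Stage 5, in days after installation.
REVIEW_OFFSETS_DAYS = (30, 90, 180)


def review_dates(installed_on):
    """Return the post-installation review dates for a given install date."""
    return [installed_on + timedelta(days=offset) for offset in REVIEW_OFFSETS_DAYS]


# Hypothetical installation date, for illustration only.
installed_on = date(2025, 1, 15)
schedule = review_dates(installed_on)
for offset, due in zip(REVIEW_OFFSETS_DAYS, schedule):
    print(f"{offset}-day review due {due.isoformat()}")
```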

Organizations such as G-UPE are valuable in this environment because they connect technical benchmarking with commercial intelligence. That means buyers do not need to evaluate coating data, gas purity profiles, motion-stage capability, and export-control implications as separate conversations. They can assess them as one integrated purchasing and risk-management decision.

This matters especially when different stakeholders ask different questions. Operators want stability and usability. Quality managers want traceability and repeatability. Procurement wants supplier reliability and commercial clarity. Executives want lower risk to revenue. A coordinated data model gives each group a view of the same system rather than conflicting snapshots.

What Procurement and Quality Teams Should Evaluate Before Supplier Selection

A strong procurement decision in ultra-precision engineering is not made by checking only price, nominal performance, and lead time. It requires a structured evaluation matrix that translates engineering detail into sourcing confidence. In most cases, 6 evaluation dimensions offer a practical minimum: specification accuracy, standards alignment, contamination control, documentation quality, service coverage, and total implementation risk.

The table below can be used as a sourcing guide across multidisciplinary projects involving metrology equipment, gas delivery, precision motion, coating inputs, and fluid-control subsystems.

This matrix becomes more powerful when procurement assigns a weight to each criterion. For example, a semiconductor gas input may rank contamination control at 35%, documentation at 25%, logistics continuity at 20%, price at 10%, and installation support at 10%. A nano-positioning subsystem may call for a different weighting, with more emphasis on control integration, dynamic stability, and recalibration capability.
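The weighted scoring described above can be sketched as a short calculation. The weights below are the semiconductor gas example from the text; the 0 to 10 scoring scale, the function name, and both sets of supplier scores are assumptions made purely for illustration.

```python
# Weights from the semiconductor gas example in the text (they sum to 100%).
GAS_INPUT_WEIGHTS = {
    "contamination_control": 0.35,
    "documentation": 0.25,
    "logistics_continuity": 0.20,
    "price": 0.10,
    "installation_support": 0.10,
}


def weighted_score(scores, weights):
    """Combine per-criterion scores into one weighted total."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


# Hypothetical supplier scores on an assumed 0-10 scale.
supplier_a = {
    "contamination_control": 9,
    "documentation": 8,
    "logistics_continuity": 6,
    "price": 5,
    "installation_support": 7,
}
supplier_b = {
    "contamination_control": 6,
    "documentation": 6,
    "logistics_continuity": 8,
    "price": 9,
    "installation_support": 8,
}

score_a = weighted_score(supplier_a, GAS_INPUT_WEIGHTS)
score_b = weighted_score(supplier_b, GAS_INPUT_WEIGHTS)
print(f"Supplier A: {score_a:.2f}, Supplier B: {score_b:.2f}")
```

With these made-up scores, supplier A ranks higher despite a weaker price score, because contamination control and documentation together carry 60% of the weight.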

Questions buyers should ask before issuing a final PO

Technical and operational checks

- Is the stated tolerance or purity level verified under the same conditions used in our production line?

- What is the recommended maintenance or recalibration interval: monthly, quarterly, or annually?

- Are replacement parts available within 7–21 days, or are critical components tied to longer import cycles?

- Can the supplier provide traceable test records for incoming inspection and future audits?

Quality and safety teams should also assess failure visibility. If a deviation occurs, will the system trigger immediate alarms, gradual drift indicators, or no warning at all? In zero-defect programs, early visibility can protect yield more effectively than a slightly better nominal performance figure.

Implementation Blueprint: Turning Fragmented Workflows into Controlled Delivery

To improve handoff reliability, companies need a documented implementation sequence rather than informal coordination. A practical blueprint can usually be deployed in 5 steps over 4–12 weeks, depending on supplier count, regulatory complexity, and system criticality. The goal is not bureaucracy. The goal is repeatable transfer of intent, data, and accountability.

Five-step implementation path

- Map the engineering chain. Identify every handoff from material specification to final acceptance, including owner, input, output, and approval timing.

- Standardize the critical data set. Create one controlled template for tolerances, purity limits, metrology method, environmental range, and regulatory notes.

- Benchmark suppliers against shared criteria. Use one scorecard for all teams instead of separate engineering, sourcing, and quality checklists.

- Run pilot validation. Test 1 or 2 representative workflows before rolling out to all product families or plants.

- Review and improve. Track non-conformance rate, deviation closure time, and supplier response speed every quarter.
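The "Standardize the critical data set" step can be illustrated with a simple controlled template. This is only a sketch: the field names mirror the items listed in that step, while the class name, the added `revision` field, and the sample values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class CriticalDataSet:
    """One controlled record per component; field names follow the step above."""

    tolerances: str = ""
    purity_limits: str = ""
    metrology_method: str = ""
    environmental_range: str = ""
    regulatory_notes: str = ""
    revision: str = ""  # assumed extra field so the record is revision-controlled

    def missing_fields(self):
        """Return the names of fields that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Hypothetical entry, for illustration only.
spec = CriticalDataSet(
    tolerances="example tolerance statement",
    purity_limits="example purity limit",
    metrology_method="example measurement method",
    environmental_range="example temperature and humidity range",
    revision="A",
)

print("Incomplete fields:", spec.missing_fields())
```

A record with any empty field would be held back from RFQ release under this scheme; here the regulatory notes are still missing.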

A pilot approach is especially useful when operations span multiple technologies. A plant may not need to redesign its entire sourcing model at once. It can begin with one high-risk chain, such as thin-film deposition plus metrology, or gas handling plus motion-stage integration, then extend the framework once early results confirm lower rework and faster approvals.

Teams should define at least 3 acceptance layers during implementation: engineering acceptance, quality acceptance, and commercial acceptance. Engineering confirms performance fit. Quality confirms traceability, calibration, and deviation control. Commercial confirms delivery continuity, service terms, and regulatory readiness. Without all 3 layers, the handoff remains incomplete even if the equipment appears operational.
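The three-layer rule can be expressed as a simple gate. The sketch below assumes each layer is recorded as a yes/no sign-off; the class and method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HandoffAcceptance:
    engineering: bool = False  # performance fit confirmed
    quality: bool = False      # traceability, calibration, deviation control
    commercial: bool = False   # delivery continuity, service terms, regulatory readiness

    def missing_layers(self):
        """Return the acceptance layers that have not signed off yet."""
        return [name for name, accepted in vars(self).items() if not accepted]

    def is_complete(self):
        """A handoff is complete only when all three layers have accepted."""
        return not self.missing_layers()


# Equipment is running and quality has signed off, but commercial has not.
handoff = HandoffAcceptance(engineering=True, quality=True)
print("Complete:", handoff.is_complete())
print("Missing:", handoff.missing_layers())
```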

Common implementation mistakes

- Treating supplier onboarding as a paperwork task rather than a system-integration step.

- Approving components before metrology and maintenance teams review service implications.

- Using one-time qualification data without planning periodic revalidation every 6–12 months.

- Assuming global availability when regional export or transport controls can alter supply routes.

When implemented correctly, this blueprint improves more than compliance. It supports faster root-cause analysis, cleaner supplier communication, and stronger executive visibility. In competitive sectors, that can preserve launch schedules and protect customer commitments even when technical complexity increases.

FAQ for Researchers, Operators, Buyers, and Decision-Makers

How do we know a multidisciplinary workflow is becoming too fragmented?

The clearest signs are repeated clarification loops, inconsistent revision control, and rising deviations at transfer points rather than during core processing. If teams spend more than 2–3 review cycles aligning the same requirement, or if incoming inspection disputes become frequent, the workflow likely lacks a shared decision framework.

Which handoffs deserve the most scrutiny in ultra-precision manufacturing?

Priority should go to any transition involving contamination sensitivity, measurement interpretation, or regulatory exposure. In practice, that includes coating-to-process interfaces, gas or chemical sourcing, metrology acceptance, and controller-to-motion integration. These are the points where small mismatches can create large downstream costs.

What is a realistic supplier evaluation timeline?

For high-risk components or systems, 2–4 weeks is common for document review and technical comparison, with another 2–6 weeks for sample validation, FAT preparation, or compliance confirmation. Complex international programs may take longer if shipping controls, cleanroom packaging, or multi-site approval are involved.
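As a rough worked example of the arithmetic, the two ranges above can be added to estimate an overall window. The sketch assumes the two phases run back to back with no overlap, which is an assumption rather than something the ranges themselves guarantee; the function and constant names are hypothetical.

```python
def add_ranges(*ranges):
    """Add (low, high) week ranges, assuming the phases run sequentially."""
    low = sum(lo for lo, _ in ranges)
    high = sum(hi for _, hi in ranges)
    return low, high


DOCUMENT_REVIEW_WEEKS = (2, 4)  # document review and technical comparison
VALIDATION_WEEKS = (2, 6)       # sample validation, FAT preparation, or compliance confirmation

total_low, total_high = add_ranges(DOCUMENT_REVIEW_WEEKS, VALIDATION_WEEKS)
print(f"Estimated evaluation window: {total_low}-{total_high} weeks")
```

Under that assumption the combined window is 4 to 10 weeks; running parts of the two phases in parallel would shorten it.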

Can price-first sourcing work in a zero-defect environment?

Only in narrow, low-risk categories. In precision manufacturing, a lower purchase price can be offset quickly by requalification, extra calibration, process instability, or delayed startup. Total cost should include support quality, lead-time resilience, documentation accuracy, and maintenance burden across the first 12 months of operation.

Multidisciplinary engineering remains essential for modern industry, but it becomes truly efficient only when the handoffs are engineered with the same rigor as the components themselves. For organizations operating at the frontier of accuracy, the winning model combines verified technical data, cross-functional supplier benchmarking, and forward-looking regulatory awareness.

G-UPE supports that model by connecting buyers and technical teams to benchmarked insight across coatings, fluid control, metrology, ultra-high purity chemicals and gases, and nano-positioning systems. If your organization needs more resilient supplier selection, tighter validation logic, or better coordination across zero-defect workflows, now is the right time to review your handoff strategy. Contact us to discuss your application, request a tailored evaluation framework, or explore more precision engineering solutions.
